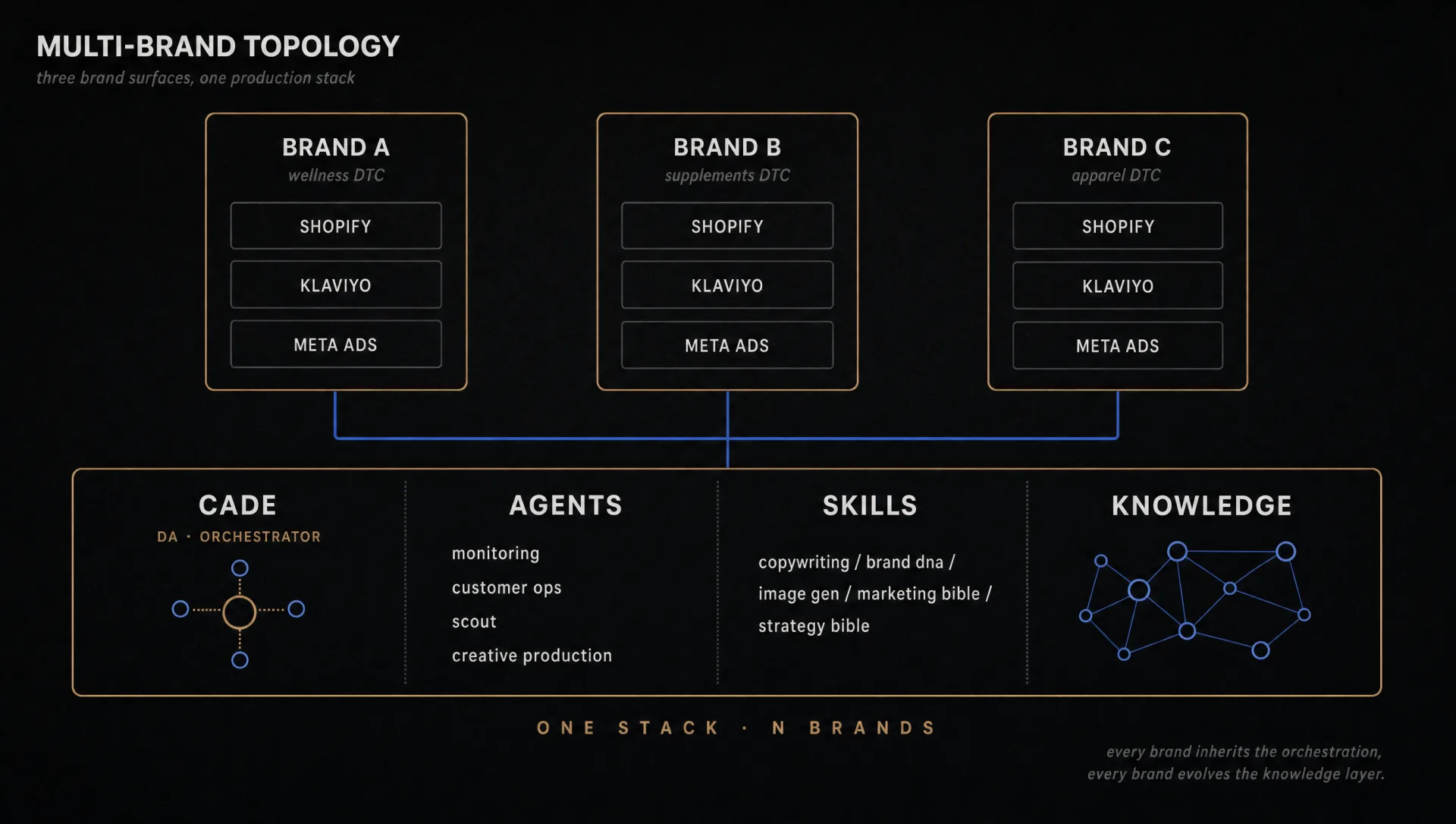

The architecture underneath the work above. A digital-assistant orchestrator coordinating four dedicated sub-agents, a knowledge layer with backlinked entity extraction, reusable system prompts loaded on demand, and a guardrail layer enforcing hard rules on every tool call. Built end-to-end. Currently runs three brand surfaces on shared infrastructure.

The work in the case studies above didn't come out of a folder of one-off Midjourney prompts. It came out of a production stack I architected, tuned, and run end-to-end. This page describes the stack at the level of shape and cadence. Specific prompts, exact thresholds, and skill contents stay internal. The architecture pattern is what matters here.

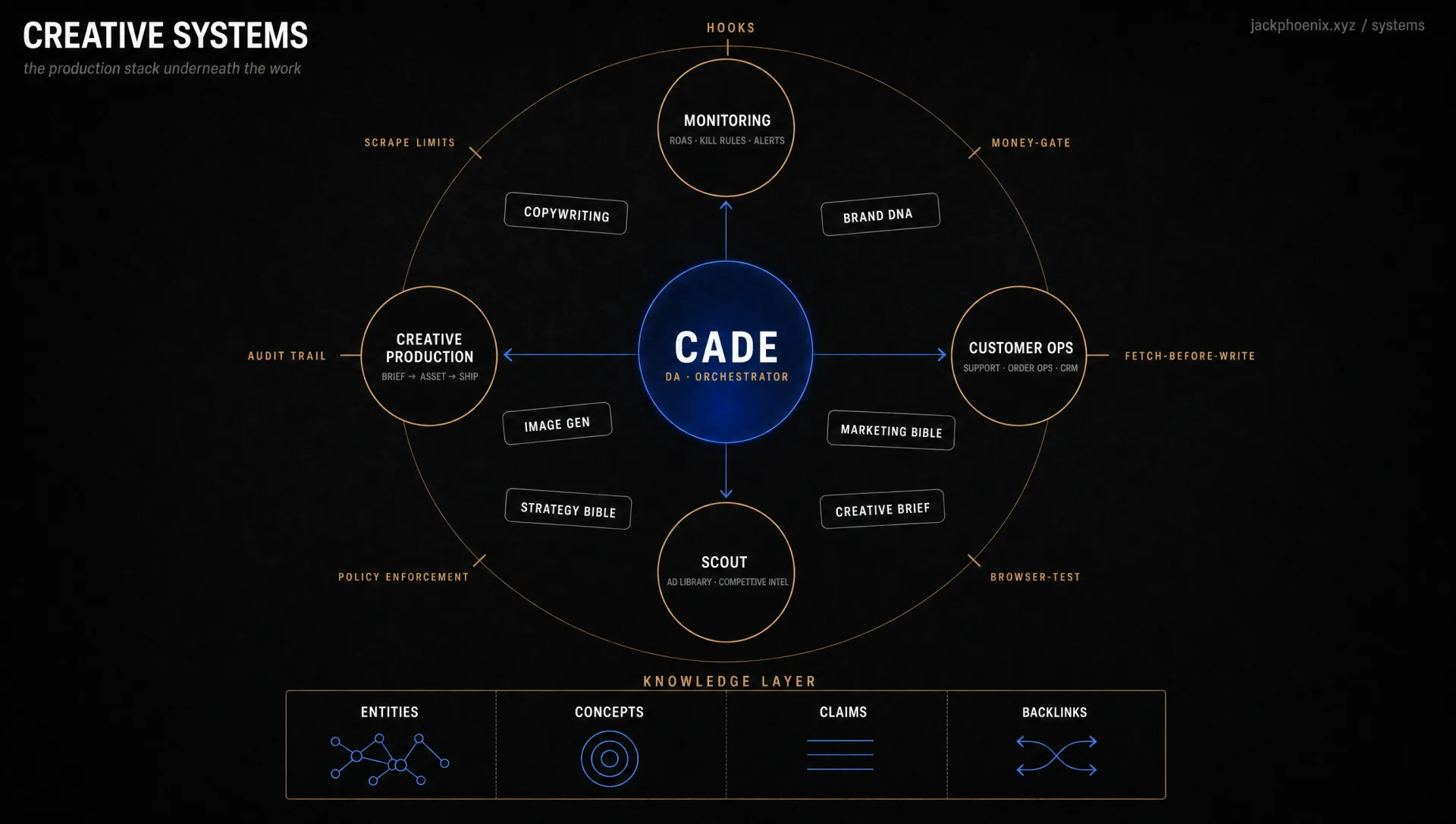

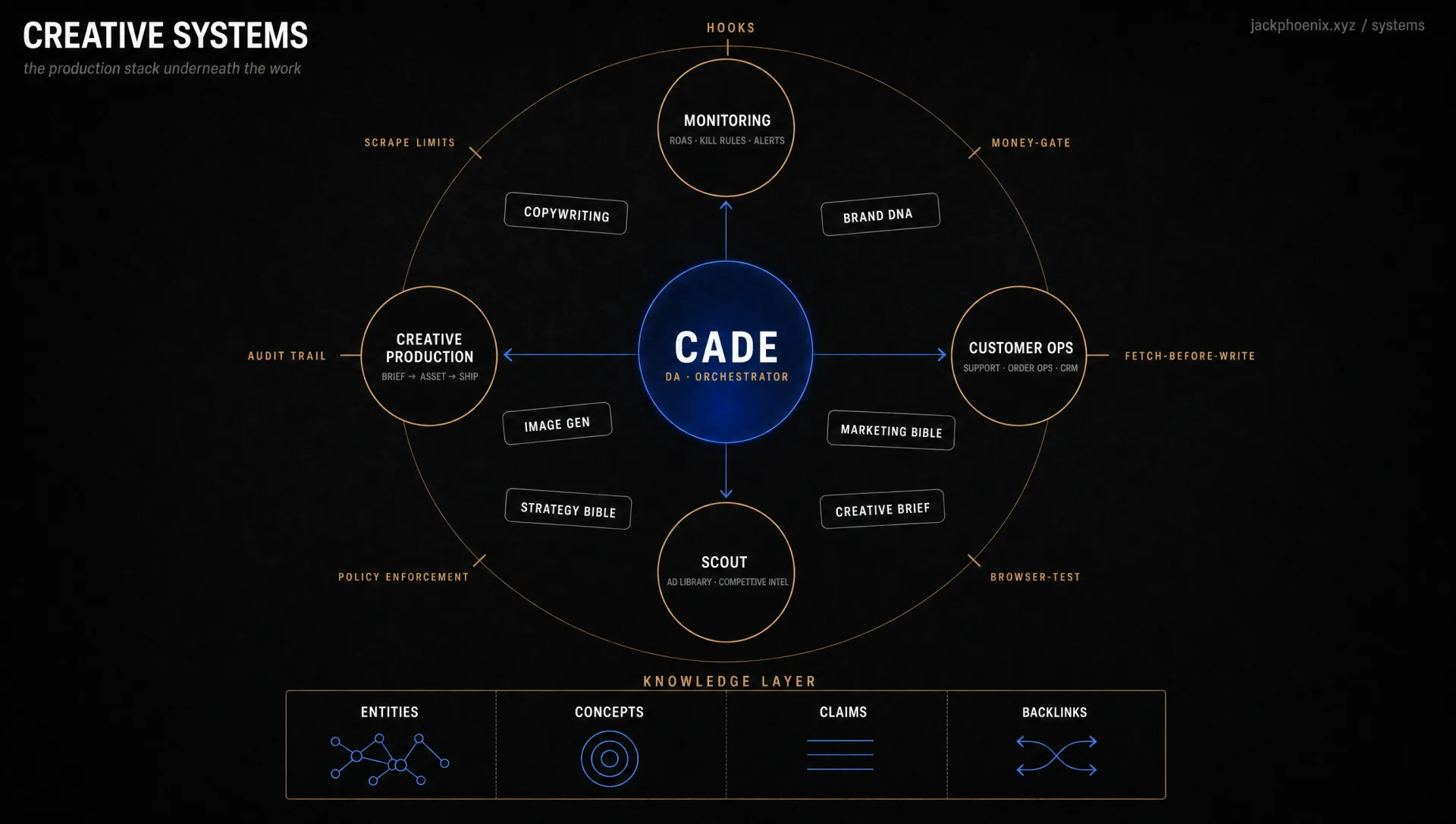

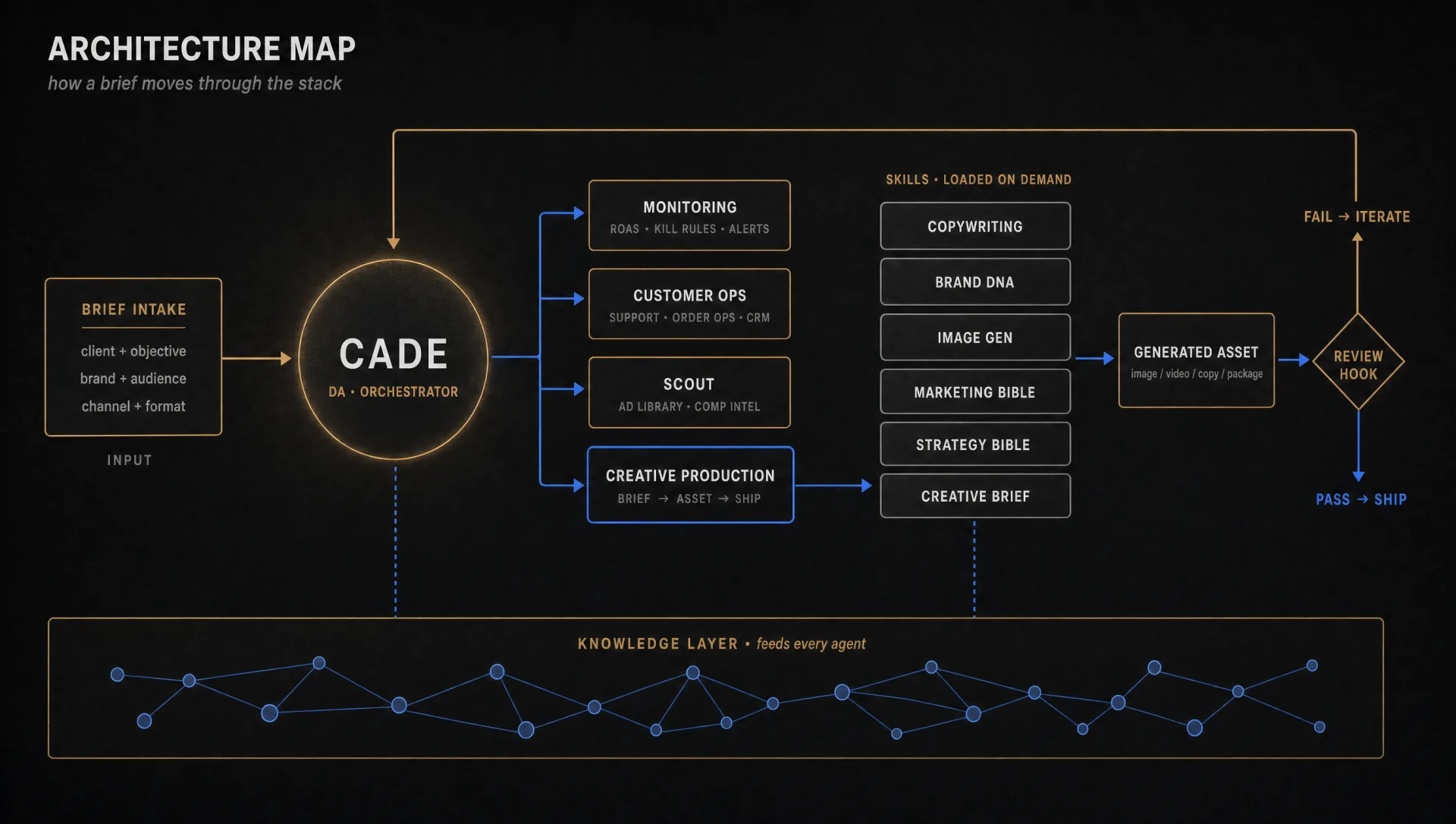

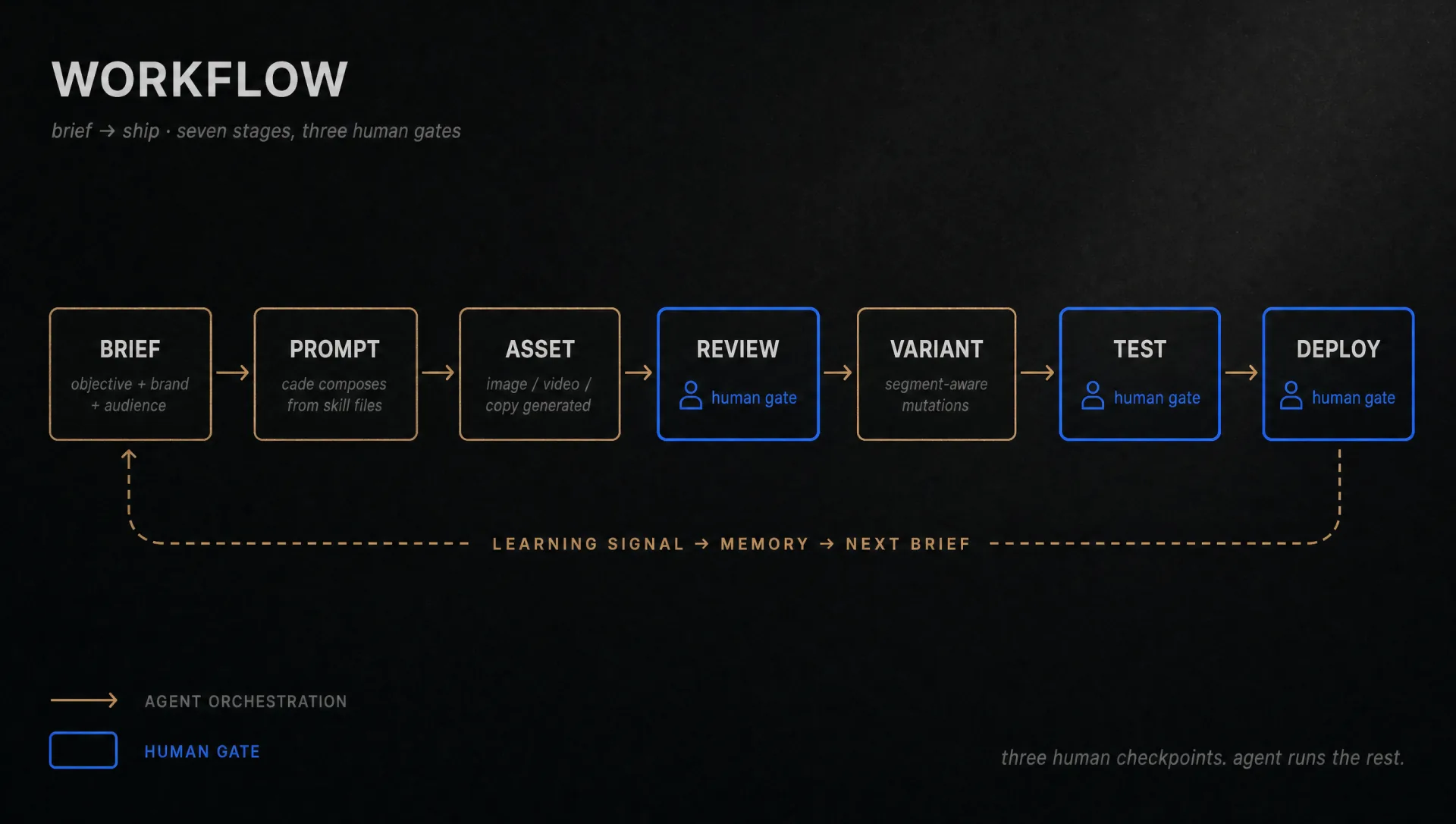

At the centre sits Cade, a digital assistant I've trained as a peer-grade collaborator rather than a tool. Cade reads a cron-scheduled briefing at the start of every session, flags anomalies, decides what's stale, and routes work between four dedicated sub-agents. Each sub-agent has its own scope: a monitoring agent that watches paid-media performance against ROAS thresholds and triggers kill rules, a customer-ops agent that handles multilingual support and order operations within response-time SLAs, a scout that runs ad-library sweeps and competitive intelligence, and a creative production agent that takes briefs through to asset, review, and ship. Cade audits their output and surfaces the decisions that need human eyes.
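The routing-and-escalation shape described above can be sketched in a few lines of Python. This is an illustration of the pattern only, not the production code: `Result`, `Orchestrator`, the two stub agents, and the "escalate anything that mentions a kill rule" check are all hypothetical names and assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Result:
    agent: str
    summary: str
    needs_human: bool = False


# Hypothetical stand-ins: real sub-agents would call models and tools.
def monitoring_agent(task: str) -> Result:
    # Assumption for the sketch: anything touching a kill rule is escalated.
    return Result("monitoring", f"reviewed {task}", needs_human="kill" in task)


def scout_agent(task: str) -> Result:
    return Result("scout", f"swept {task}")


@dataclass
class Orchestrator:
    agents: Dict[str, Callable[[str], Result]]
    escalations: List[Result] = field(default_factory=list)

    def route(self, scope: str, task: str) -> Result:
        """Send a task to the sub-agent that owns the scope, then audit the result."""
        if scope not in self.agents:
            raise KeyError(f"no sub-agent registered for scope {scope!r}")
        result = self.agents[scope](task)
        if result.needs_human:
            # Surface the decision instead of acting on it silently.
            self.escalations.append(result)
        return result


cade = Orchestrator({"monitoring": monitoring_agent, "scout": scout_agent})
cade.route("scout", "ad-library sweep")
cade.route("monitoring", "kill rule on campaign A")
print([r.summary for r in cade.escalations])  # ['reviewed kill rule on campaign A']
```

Keeping the escalation list on the orchestrator, rather than inside each agent, is one way to keep the human-review queue in a single place.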

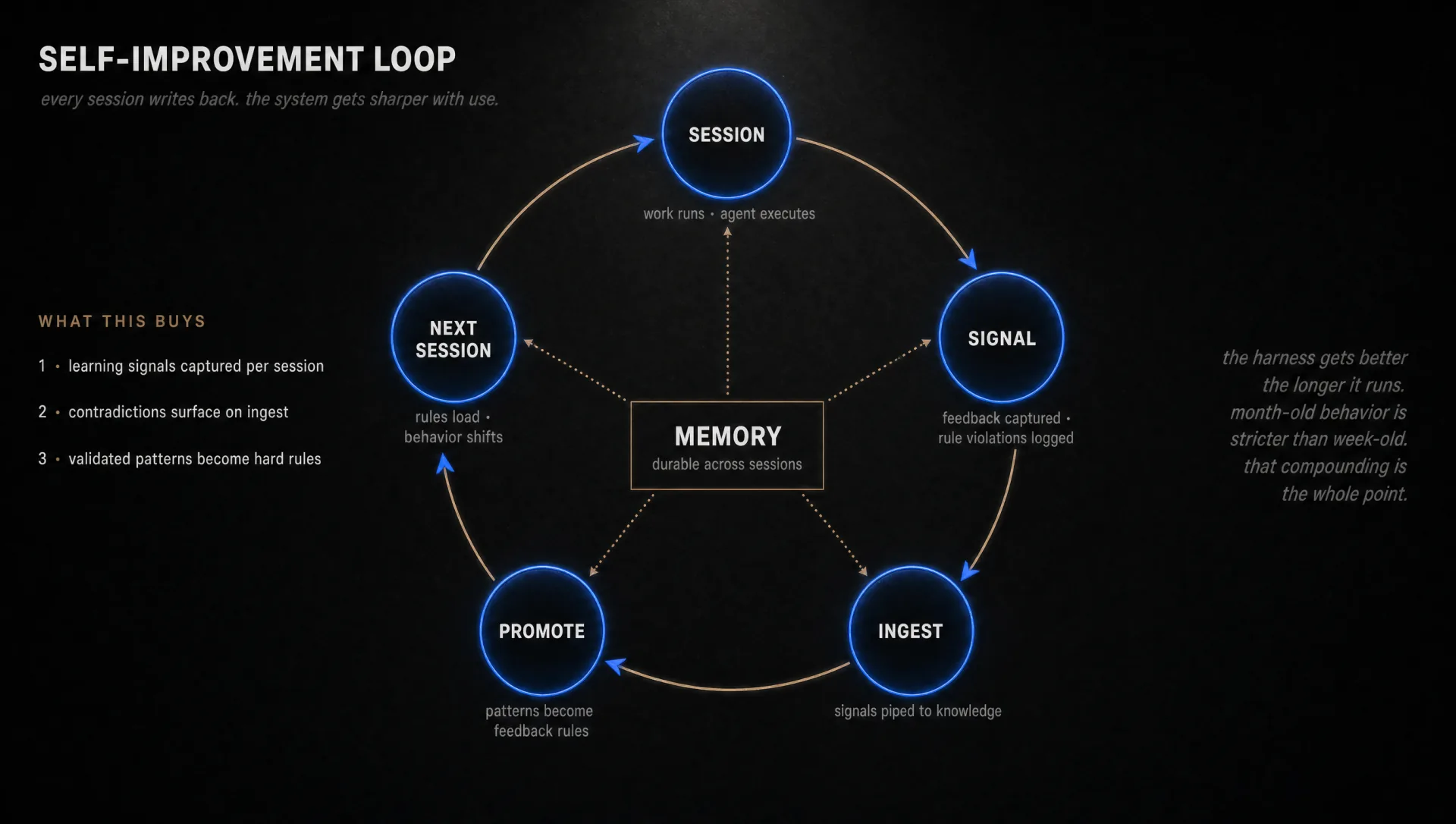

Underneath the agents sits a knowledge layer that extracts entities, concepts, and claims and maintains the backlinks between them. When something useful gets learned in a session, whether a new voice-of-customer (VOC) pattern, a competitor angle, a winning hook archetype, or a contradiction with prior research, it gets ingested and cross-referenced against existing knowledge, and any contradictions get flagged for resolution. The agents read from this layer rather than re-deriving context from scratch every session. Compounding context is the moat.
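A minimal sketch of that ingest step, assuming claims can be reduced to an (entity, attribute, value) triple with a source note. The `Claim` and `KnowledgeLayer` names, the triple schema, and the example data are hypothetical; the real extraction and storage are not described here.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    entity: str
    attribute: str
    value: str
    source: str  # the session or note the claim came from


class KnowledgeLayer:
    def __init__(self) -> None:
        self.claims = {}                    # (entity, attribute) -> accepted Claim
        self.backlinks = defaultdict(set)   # entity -> sources that mention it
        self.contradictions = []            # (existing, incoming) pairs to resolve

    def ingest(self, claim: Claim) -> bool:
        """Store a claim; return False if it contradicts what is already known."""
        self.backlinks[claim.entity].add(claim.source)
        key = (claim.entity, claim.attribute)
        existing = self.claims.get(key)
        if existing is not None and existing.value != claim.value:
            # Flag for resolution rather than silently overwriting.
            self.contradictions.append((existing, claim))
            return False
        self.claims[key] = claim
        return True


kb = KnowledgeLayer()
kb.ingest(Claim("competitor-x", "lead hook", "price", "session-01"))
kb.ingest(Claim("competitor-x", "lead hook", "durability", "session-07"))
print(len(kb.contradictions))          # 1
print(sorted(kb.backlinks["competitor-x"]))  # ['session-01', 'session-07']
```

The backlink is recorded even when the claim is rejected, so the conflicting source stays discoverable from the entity.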

Skills are reusable system prompts that live as discrete files. Each one encapsulates one workflow: a copywriting framework with a 7-method stack, a brand-DNA system, image-generation workflows for each surface and aspect ratio, codified marketing playbooks, and strategy frameworks. Cade and the sub-agents load only the skills they need for the task at hand. New skills get authored when a pattern repeats three times. Old skills get challenged when their assumptions no longer hold.
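On-demand loading of this kind can be sketched as a small lazy loader. The `SkillLibrary` class, the one-Markdown-file-per-skill layout, and the file names in the usage note are assumptions for illustration; the actual skill format and contents stay internal.

```python
from pathlib import Path
from typing import Dict, List


class SkillLibrary:
    """Lazily reads skill files from a directory and caches them per session."""

    def __init__(self, root) -> None:
        self.root = Path(root)
        self._cache: Dict[str, str] = {}

    def load(self, name: str) -> str:
        # Read from disk only the first time a skill is requested.
        if name not in self._cache:
            path = self.root / f"{name}.md"  # assumed layout: one file per skill
            self._cache[name] = path.read_text(encoding="utf-8")
        return self._cache[name]

    def build_system_prompt(self, base: str, needed: List[str]) -> str:
        # Only the skills the task needs are appended to the base prompt.
        return "\n\n".join([base, *(self.load(name) for name in needed)])
```

With a directory containing, say, `brand_dna.md` and `copywriting.md`, calling `SkillLibrary("skills").build_system_prompt("You are Cade.", ["brand_dna"])` would read only the first file, which keeps unrelated skills out of the context window.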

A layer of constitutional rules wraps every tool call. The agents cannot spend money without explicit authorization in the current prompt. They cannot deploy a Shopify theme asset without fetching the live version first. They cannot claim a deploy works without browser-verifying the live URL. They cannot scrape platforms outside agreed boundaries. These rules are codified as hooks that fire on tool execution, not as suggestions in the prompt. They've blocked real incidents.
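The difference between a hook and a prompt suggestion is that the hook runs in code before the tool does. A minimal sketch, assuming each tool call carries a context dictionary; the tool names, context keys, and `GuardrailViolation` exception are hypothetical and only two of the rules above are shown.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


class GuardrailViolation(Exception):
    """Raised when a tool call breaks a hard rule."""


@dataclass
class ToolCall:
    tool: str
    args: Dict[str, Any]
    context: Dict[str, Any] = field(default_factory=dict)


def require_spend_authorization(call: ToolCall) -> None:
    if call.tool == "spend_money" and not call.context.get("spend_authorized_in_prompt"):
        raise GuardrailViolation("spending requires explicit authorization in the current prompt")


def require_live_fetch_before_deploy(call: ToolCall) -> None:
    if call.tool != "deploy_theme_asset":
        return
    fetched = call.context.get("fetched_live_assets", set())
    if call.args.get("asset") not in fetched:
        raise GuardrailViolation("fetch the live asset before deploying over it")


def execute(call: ToolCall, tools: Dict[str, Callable[..., Any]],
            hooks: List[Callable[[ToolCall], None]]) -> Any:
    for hook in hooks:
        hook(call)  # any hook can abort the call before the tool runs
    return tools[call.tool](**call.args)


tools = {"spend_money": lambda amount: f"spent {amount}"}
hooks = [require_spend_authorization, require_live_fetch_before_deploy]
try:
    execute(ToolCall("spend_money", {"amount": 50}), tools, hooks)
except GuardrailViolation as err:
    print(f"blocked: {err}")
```

Because the check sits in `execute`, the model cannot talk its way past it: an unauthorized call never reaches the tool.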

Every session writes back. Learning signals get captured during the work and ingested into the knowledge layer, and validated patterns get promoted into hard feedback rules. The next session loads those rules. The result is a stack that's stricter and sharper after a month than after a week. The compounding is the whole point. Most AI workflows decay because they're stateless. This one accumulates.
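The write-back loop can be sketched as state that survives between sessions. Everything specific here is an assumption for illustration: the `SessionMemory` class, the JSON file as storage, and especially the `promote_after=3` default, which is a placeholder and not the real promotion threshold.

```python
import json
from pathlib import Path


class SessionMemory:
    """Persists learning signals and promoted rules across sessions."""

    def __init__(self, path, promote_after: int = 3) -> None:
        self.path = Path(path)
        self.promote_after = promote_after  # placeholder threshold
        if self.path.exists():
            state = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            state = {"signals": {}, "rules": []}
        self.signals = state["signals"]  # pattern -> times validated
        self.rules = state["rules"]      # patterns promoted to hard rules

    def record(self, pattern: str) -> None:
        """Count one validated observation; promote once it repeats enough."""
        self.signals[pattern] = self.signals.get(pattern, 0) + 1
        if self.signals[pattern] >= self.promote_after and pattern not in self.rules:
            self.rules.append(pattern)

    def write_back(self) -> None:
        """Save state so the next session starts from it."""
        payload = {"signals": self.signals, "rules": self.rules}
        self.path.write_text(json.dumps(payload, indent=2), encoding="utf-8")
```

A session would construct `SessionMemory("memory.json")` at start, call `record(...)` as patterns are validated, and call `write_back()` before exiting; a later session constructed on the same file sees the accumulated counts and any promoted rules.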

The architecture pattern is the same one larger AI-native retail holding companies are building at portfolio scale. The difference is volume, not shape. I run it across three brand surfaces as a single operator. The pattern scales. It has delivered two ad films out of one production system at roughly one-thirtieth the cost of an outsourced equivalent. The system itself is the deliverable as much as the work it produces.

This is portfolio-scale infrastructure built and run by one person. Production at three-brand scale is the proof. Deployment at thirteen-brand scale is the next operating step. The architectural pattern doesn't change. The team underneath does.